/ / THEFUTURE /

In this issue: Why flat-rate AI pricing was always too good to last. Danish School of Media and Journalism’s Peder Hammerskov on value, trust, and what AI should never replace. Plus: Six ways to shrink your token bill before someone does it for you.

What we’re talking about: Newer, more capable models use more tokens. And users put whole books, code bases, videos into chats. We all knew where this was going to go: Monthly AI subscriptions are subsidized and don’t cover the cost of compute.

GitHub Copilot had monthly plans starting at $10 that are now being switched to usage-based pricing. “Copilot is not the same product it was a year ago,” the company says, mentioning long-running agents. Which is true, but they’re also the ones changing it.

Customers using OpenAI’s or Anthropic’s API are already familiar with pay-as-you-go billing and have long complained about increased token use with newer models. But there are quite a few offerings for end users and enterprises alike where you pay a flat monthly fee. The companies behind them have to either cut down on requests, raise prices, or burn some more money.

Ed Zitron has a long, angry, told-you-so post about this, calling monthly subscriptions a scam to hide the actual costs, and arguing that AI companies have lured their customers into building things that are ultimately unsustainable in their current form. (And he warns that data centers are now being built largely on hypothetical revenue.)

While some startups and tech companies brag about tokenmaxxing, the rest of us are figuring out which AI tasks can be delegated to smaller, token-efficient models, and how to teach employees that bigger is not always better: reasonable model, not reasoning model.

And now: When Peder Hammerskov from the Danish School of Media and Journalism called me to ask about our AI initiatives at SPIEGEL for an upcoming talk at the Hacks/Hackers AI Journalism Summit, I asked him to share his view on the current state of affairs as well. Thank you, Peder, and see you in Baltimore!

Three Questions with Peder Hammerskov

Peder Hammerskov is Head of Center for AI and Assistant Professor at the Danish School of Media and Journalism.

What's the most important question right now?

I think the key question is no longer “How can we use AI?” but “Where can AI help us create more value for users without weakening editorial judgment, trust, and distinctiveness?”

Too much of the conversation is still about speed and output. For newsrooms, the bigger opportunity is relevance, explanation, service, and better use of human expertise.

Are we taking AI seriously enough?

Yes and no. Many media organizations are paying attention, but often in a narrow way: as a tool question, a productivity question, or a fear question.

I think AI should be taken seriously as a leadership and culture question. If you want journalists to use it well, you need psychological safety, clear guardrails, and a strong sense of where human judgment must remain central.

What future are you looking forward to?

I’m looking forward to a future where AI helps journalism become more useful, not just more efficient.

A future where newsrooms use AI to free up time for deeper reporting, stronger explanation, and better service for users, while keeping editorial responsibility where it belongs: with humans.

Hands on: How to reduce your token footprint. A token is a chunk of text, like a word or part of a word. AI companies bill you for the input tokens you send and for the output tokens the model generates. For Opus-4.7, a million tokens in is $5, a million tokens out is $25.
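To make the arithmetic concrete, here is a minimal sketch that estimates what a single request costs at the prices quoted above. The function name and the example token counts are made up for illustration, and real bills depend on your provider's current price list.

```python
# Prices quoted above, in US dollars per one million tokens.
INPUT_PRICE_PER_MILLION = 5.00
OUTPUT_PRICE_PER_MILLION = 25.00


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in dollars for one request."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# Hypothetical request: a 200,000-token document in, a 2,000-token summary out.
print(f"${estimate_cost(200_000, 2_000):.2f}")  # prints $1.05
```

In this example almost all of the cost comes from the input side: the long document accounts for $1.00, the short summary for five cents.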

If you’re not coding, AI companies usually hide the token counts. A million tokens is like ten novels of text. Which sounds like a lot, but really isn’t, especially not if you’re pushing code or working with media. So what do you do if you have to watch your token use?

- Simple tasks: For spell-checking, translation, or web search, a smaller model will often get you there just fine. ChatGPT comes with mini and nano versions. Claude Opus has smaller siblings called Haiku and Sonnet. On German texts, Gemini-3-Flash ranks higher than GPT-5.4 at a fraction of the cost, and in creative writing it beats Sonnet-4.5. (LLM Leaderboard)

- Start small, go bigger: If the small models don’t deliver, switch to the mid-tier: GPT-5.3, Sonnet-4.6, Gemini-3.1. Try adjusting the reasoning effort. Only then bring out the premium models.

- Fresh start. Long conversations accumulate context, and the model processes the entire conversation with every new message. New topic, new chat.

- Watch big documents. Pasting in large texts, uploading big files, or referencing them significantly increases the tokens processed per message. Where possible, pull in specific sections rather than the whole thing.

- Tell AI to shut up. Sometimes it’s nice to have a freewheeling conversation, but if you know what you want, tell the machine to cut it: “Be concise. Give only the answer. No intro, no outro, no summary. Use bullets only if necessary.” While it might not reduce hidden reasoning effort, it helps keep the precious output tokens in check.

- Brace for impact: If you’re building a whole new web app for what could have been a short Python script, or generating a thirteen-page research report based on 65 web sources to tell you where to go for dinner, that’s gonna cost you. Plan accordingly.
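The “fresh start” tip is easier to see with numbers. The sketch below assumes, for simplicity, that every message and every reply has the same length and that the full history is sent as input on each turn. Both are simplifications, and the function name and token counts are hypothetical.

```python
def total_input_tokens(turns: int, tokens_per_message: int, tokens_per_reply: int) -> int:
    """Sum the input tokens processed over a chat that resends its full history."""
    total = 0
    history = 0  # tokens already in the conversation
    for _ in range(turns):
        history += tokens_per_message  # the new user message joins the history
        total += history               # the whole history is processed as input
        history += tokens_per_reply    # the model's reply joins the history too
    return total


# Hypothetical numbers: 500-token messages and 500-token replies.
one_long_chat = total_input_tokens(turns=20, tokens_per_message=500, tokens_per_reply=500)
four_short_chats = 4 * total_input_tokens(turns=5, tokens_per_message=500, tokens_per_reply=500)
print(one_long_chat, four_short_chats)  # prints 200000 50000
```

With these made-up numbers, the same twenty messages add up to 200,000 input tokens in one long chat, but only 50,000 when spread over four fresh chats.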

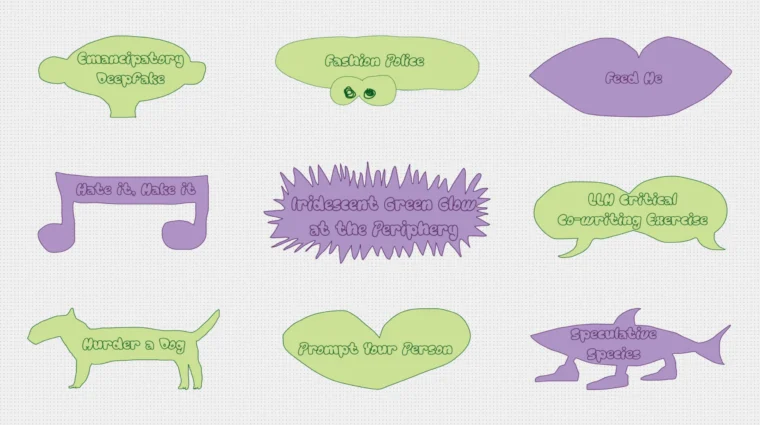

One more thing: From The Hmm and the Responsible IT research group at the Amsterdam University of Applied Sciences, it’s ai, ai, ai: A collection of fun things to do, like training your own image model or fine-tuning a small model on gossip, all to help you engage critically with AI and learn a thing or two.

“Rather than seeing AI in extremes—either sparking excitement or sparking fear—these playful creations remind us there are other ways of engaging with the technology.”

This is THEFUTURE.