/ / THEFUTURE /

In this issue: A Hamburg hotel, closed doors, and an AI executive describing a future most companies aren’t building for. Eva Gengler on why AI is a political choice, not a technical destiny. Plus: vibecoding data leaks, and what happens when you give an agent a credit card.

What we’re talking about: At a fancy hotel in Hamburg, behind closed doors, an executive at one of the major AI companies talked about the next couple of months. How AI will become something else entirely.

No longer a chatbot that feels like something out of the 90s, where you type into a computer. More like a personal assistant, think OpenClaw, with routines and agents running forever, invisibly in the background. Spinning up interactive dashboards. Talking to you.

How most companies are still building for what AI is and can do today, not what’s coming next. How they still don’t seem to get exponential growth, even after years of it. How more than ever, exclusive data is the actionable moat.

He didn’t just seem to believe this. His organization has the financial backing to make it happen, and a billion users to experiment on. What he doesn’t have, at least for now, is enough compute to release new features, some of which are already built.

Later, at the OMR conference, I teamed up with Niels Rasmussen from public broadcaster NDR to talk, on the record, about clicks, or the lack of them. People rarely, if ever, visit the sources cited in AI answers. More and more publishers are watching their search traffic drop.

For some, including SPIEGEL, Google Discover makes up the difference. For others, it means the collapse of verticals, a rethink of what they offer, and a harder push toward subscribers.

But there’s plenty of room for good partnerships. We in the media are sitting on a treasure the AI systems desperately need for grounding: real-time information. We just can’t sell ourselves short.

What else I’ve been reading:

And now: She explores AI from a feminist perspective. She wrote her PhD about it. And she published a book, “Feministische KI”, in which she lays out how artificial intelligence deepens inequality and what we can do about it. I’d say: it starts with listening to the actual experts. Welcome, Eva Gengler.

Three Questions with Eva Gengler

Eva Gengler is an author, speaker, and co-founder of feminist AI and enableYou. She holds a PhD.

What's the most important question right now?

Are we using AI to automate an oppressive past – or to build a more empowering future?

AI is largely built, governed, and monetized by a small, privileged part of society, while many others are reduced to consumers and data sources. A small, privileged group of white men decides where the future is heading. When we apply AI to existing systems, we risk scaling the injustices already embedded in them. Hiring, lending, policing, the justice system, military technology: all of these already reflect structural inequalities. Automating them does not make them more just.

This is especially dangerous for people who are already marginalized: women, queer people, People of Color, people with disabilities, activists, and those facing multiple forms of discrimination at once.

But AI can also be used differently. It can help us question broken systems instead of optimizing them: make recruiting fairer, expose pay and leadership gaps, protect biodiversity, detect misinformation, and assist victims of domestic violence. Technology reflects the objectives we give it, the people who shape it, the data we train it with, and the power structures it serves. AI should be feminist: power-critical, inclusive, and oriented toward justice. Built collectively, not by a few for the many.

What's one fact about AI that everyone should know?

AI is neither neutral nor objective. AI systems are trained on human decisions, human language, human institutions, and human histories, so they inherit our biases, our power structures, and our mistakes. When we pretend otherwise, we make the politics of AI invisible and harder to challenge.

This is not unique to AI. Journalism is not neutral. Scientific studies are not neutral. Everything is shaped by individual, institutional, political, and economic objectives. The question is not whether AI has values. The question is whose values it serves. If AI is built within neo-liberal, neo-colonial, and patriarchal systems, it will reproduce those logics. But this is not inevitable. We can change objectives, institutions, laws, teams, and power relations behind these systems. AI is not a technical destiny. It is a political choice, and now is the time to make a different one.

We need strong legislation, real accountability from management teams, and a broader societal shift in how we understand technology and power.

A powerful book unpacking the ideology behind today’s AI and Silicon Valley is “More Everything Forever” by Adam Becker.

What future are you looking forward to?

One in which we learn from the past to build more just, diverse, and inclusive futures, rather than locking in the hierarchies that shaped it.

I want a future that understands people do not start from the same place, and that this shapes our opportunities, privileges, vulnerabilities, and choices. One that treats inequality as a structural reality, not an individual failure. Driven by care for each other and for the planet, rooted in mutual support rather than domination, in justice rather than efficiency, responsibility rather than endless growth.

AI will not be the single answer to this future. No technology can be. But we will also not achieve this future by ignoring AI. The question is whether we use it to repeat and reinforce systems of oppression and privilege or to dismantle them.

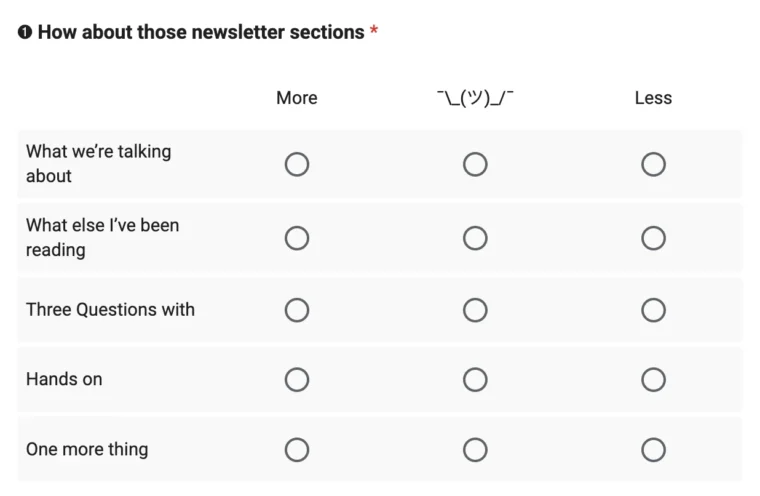

Hands on: Three questions about this very newsletter. I’d love to hear from you. It’s quick, I promise!

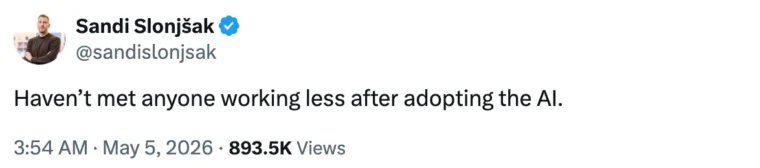

One more thing: I came across this very good post on X.

See also the new glossary entry for Permanent Underclass theory.

This is THEFUTURE.